AI is changing the role of the developer, shifting work from coding toward agent orchestration and higher-level architecture. While not the only area seeing results, developer productivity is where AI’s traction is most visible and measurable, particularly among individual developers, small teams, and greenfield shops free of legacy systems and layered processes.

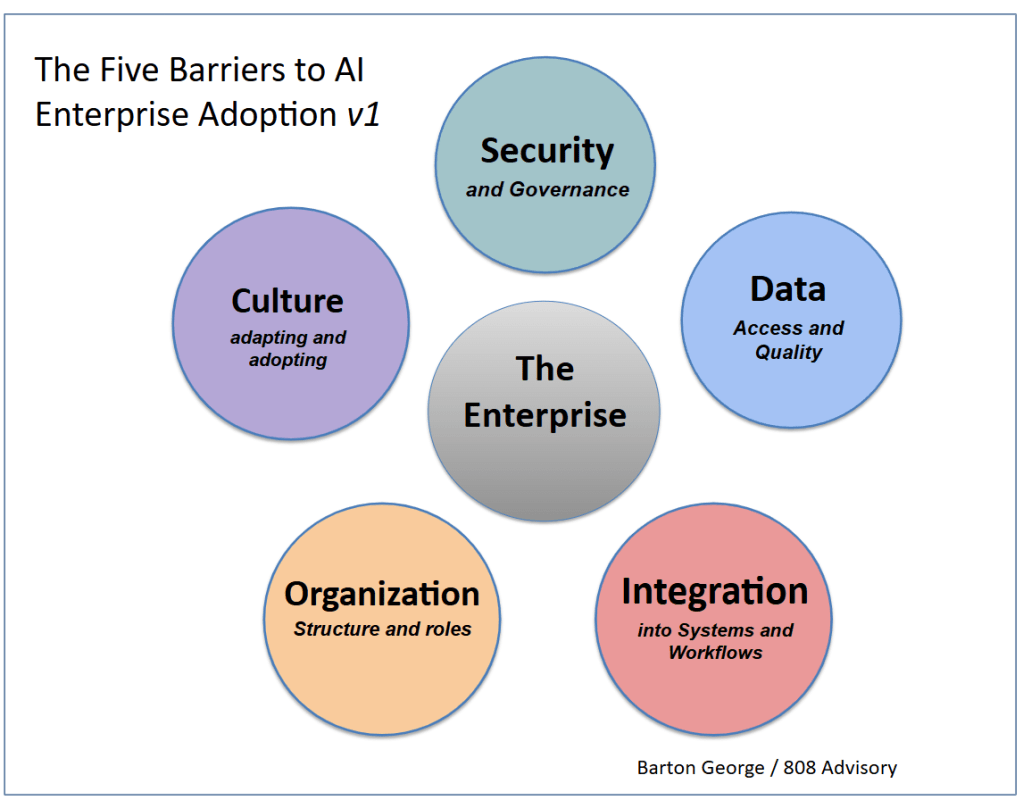

Enterprise adoption is different. Scaling those results, and those from other use cases, beyond pilots and isolated efforts requires addressing five key barriers. Enterprises that do will pull ahead. Those that don’t will find themselves increasingly outpaced by peers that have.

Note: This is v1; any and all feedback is welcome.

The Five Barriers

- Security and Governance. If security and governance aren’t addressed, AI not only falls short of its potential but becomes a liability, opening the door to breaches, unauthorized actions, and compliance failures. In the agentic era, traditional enterprise security models, which assume a human checkpoint before each decision, no longer apply: agents act autonomously, at machine speed and at scale. They require a governance layer between agents and infrastructure that enforces policies, monitors behavior, and audits actions.

- Data Access and Quality. AI’s usefulness depends directly on the quality and accessibility of internal data. In large enterprises, that data is scattered across disconnected databases and geographies, and without deliberate efforts to unify it, clean it, and make it accessible, AI remains narrow and underpowered. If you don’t have a data strategy, you don’t have an AI strategy.

- Integration into Systems and Workflows. For AI to succeed beyond individual tools, it needs to be brought in-process and woven into existing software, workflows, and decision points, a non-trivial exercise in large enterprises with decades of legacy tooling. The challenge is less about deploying models and more about reworking how work flows through the organization.

- Organizational Structure and Roles. To stay competitive, enterprises will need to rethink how they are organized, which teams and roles they need, and what skills those roles require. This includes changing how developers, operations, and business stakeholders interact and make decisions. This rethinking matters when AI is used for efficiency, but it becomes essential when the goal is discovering and developing new opportunities, which requires reinventing both business models and the structures that support them.

- Culture: Adapting and Adopting. Adoption is constrained as much by culture as by technology. Whether employees embrace AI depends heavily on whether leadership creates an environment that encourages and supports its use through clear communication, visible commitment, and concrete examples of what good looks like. Without that, adoption stays inconsistent and localized. Equally important is transparency about how roles will evolve. When employees are left to fill in the blanks themselves, anxiety about job security sets in, and that anxiety can paralyze large parts of the organization. While many see AI as an opportunity, many others, lacking context or clear expectations, see it as a threat.
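To make the governance layer from the Security and Governance barrier a little more concrete, here is a minimal Python sketch. It is illustrative only, not a reference design or any product’s API: the `Policy`, `AuditEntry`, and `GovernanceGateway` names, the per-agent action allowlist, and the human-approval rule are all hypothetical. It shows the three jobs named above: enforcing policy before an agent’s action reaches infrastructure, monitoring behavior (here, just counting denied attempts), and auditing every decision.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


@dataclass
class Policy:
    # Hypothetical policy model: an allowlist of actions per agent, plus
    # actions that additionally require a named human approver.
    allowed_actions: dict[str, set[str]]
    requires_approval: set[str] = field(default_factory=set)


@dataclass
class AuditEntry:
    timestamp: str
    agent_id: str
    action: str
    decision: str  # "allowed", "denied", or "needs_approval"
    approved_by: Optional[str] = None


class GovernanceGateway:
    """Hypothetical layer that sits between agents and infrastructure."""

    def __init__(self, policy: Policy) -> None:
        self.policy = policy
        self.audit_log: list[AuditEntry] = []

    def authorize(self, agent_id: str, action: str,
                  approved_by: Optional[str] = None) -> str:
        # Enforce: unknown agents and unlisted actions are denied by default.
        if action not in self.policy.allowed_actions.get(agent_id, set()):
            decision = "denied"
        elif action in self.policy.requires_approval and approved_by is None:
            decision = "needs_approval"
        else:
            decision = "allowed"
        # Audit: every decision is recorded, including denials.
        self.audit_log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent_id=agent_id,
            action=action,
            decision=decision,
            approved_by=approved_by,
        ))
        return decision

    def execute(self, agent_id: str, action: str, handler: Callable[[], T],
                approved_by: Optional[str] = None) -> T:
        # Only call through to the underlying system if policy allows it.
        decision = self.authorize(agent_id, action, approved_by)
        if decision != "allowed":
            raise PermissionError(f"{agent_id} -> {action}: {decision}")
        return handler()

    def denial_count(self, agent_id: str) -> int:
        # Monitor: a simple behavioral signal, e.g. for alerting on an agent
        # that keeps attempting actions outside its policy.
        return sum(1 for e in self.audit_log
                   if e.agent_id == agent_id and e.decision == "denied")


if __name__ == "__main__":
    policy = Policy(
        allowed_actions={"ops-agent": {"read_logs", "restart_service"}},
        requires_approval={"restart_service"},
    )
    gateway = GovernanceGateway(policy)
    print(gateway.execute("ops-agent", "read_logs", lambda: "log lines"))  # log lines
    print(gateway.authorize("ops-agent", "drop_database"))  # denied
    print(gateway.authorize("ops-agent", "restart_service"))  # needs_approval
    print(gateway.denial_count("ops-agent"))  # 1
```

The key design choice is default-deny: an agent can do only what its policy explicitly lists, and consequential actions can be routed to a human, restoring the checkpoint that autonomous agents otherwise remove. A real deployment would also need identity, persistent tamper-resistant logs, and richer behavioral signals than a denial count.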

Pau for now…

Posted by Barton George